I have decided to migrate my blog to a new site. Most of the previous content is still here. A few of the posts have been removed since they were no longer viable. Also the layout has been freshened

OpenInfra Days 2023 in Raleigh, NC

I am running OpenInfra Days in Raleigh next week. The event is going to be dominated by Red Hatters, but I also invited folks from other organizations to speak (NASA, Rackspace and more). The f

Stretching Kubernetes Architecture across Distributed Private Cloud

As a Solutions Architect, after years of helping my clients select the right architectures for their use cases and requirements, I have come to a certain realization. My primary job is t

All-in-3 OpenShift (OCP) cluster with OCS (storage) and CNV (Virtualization)

Another day, another “Edge” Architecture. This time let’s see what the minimum all-in-one OCP/OCS/CNV would have to look like. But first, what are the key benefits? Okay, I got you

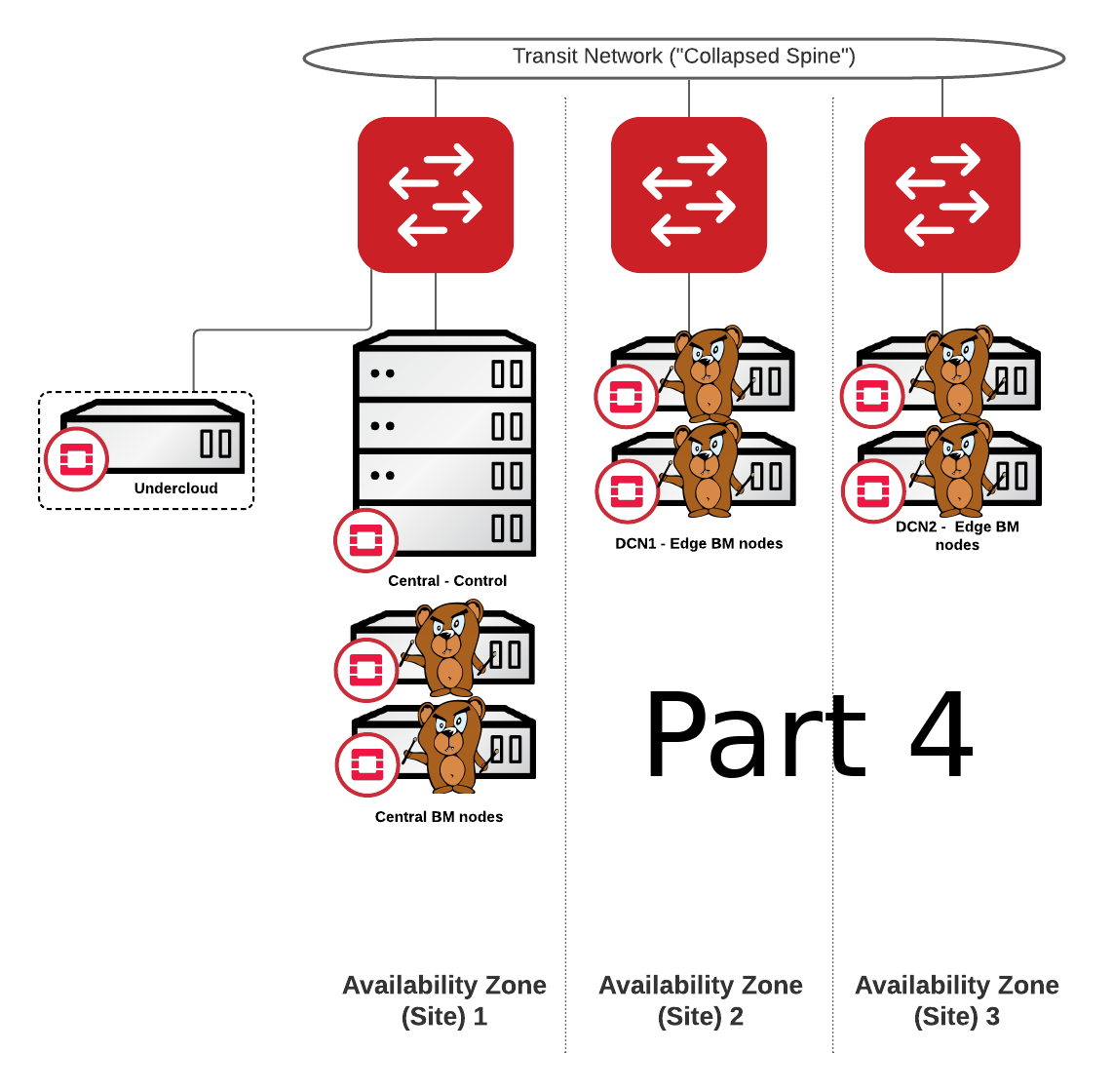

BMaaS Part 4 – Routed Networks / Bears at the edge

This is part 4 in the series of BareMetal-as-a-Service with Ironic. In this article I will focus on highly distributed architectures. Baremetal cloud as a service for the edge and large environment

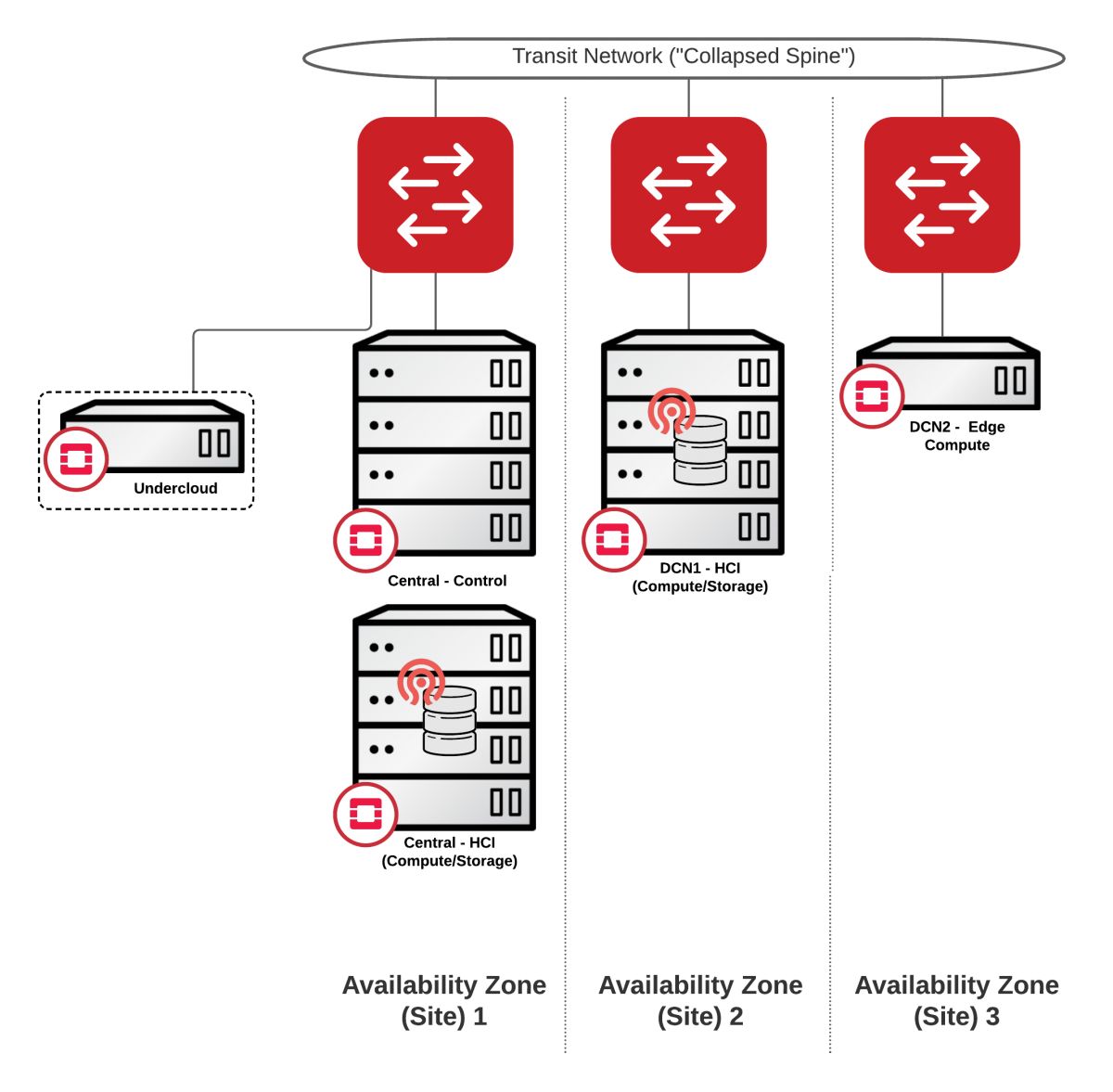

Distributed Private Cloud Infrastructure – DCN / Edge

I have hesitated to post anything about Red Hat OpenStack Edge since it was introduced in OSP13, simply because I found it quite difficult to consume. Also the storage situation back then was .. not

Networking-Ansible for Baremetal Consumption – How to create your network automation driver.

It is not a big deal for me to admit that I am a huge nerd when it comes to managing baremetal infrastructure in the datacenter. I feel like a dying breed in times when everyone just wants to

OpenShift on OpenStack – no-brainer on-prem solution

I have to admit, it has taken me a while to produce this next article. Today, however, that is about to change, and I am happy to introduce on this blog OpenShift on OpenStack – no

OpenStack mini-Summit in Triangle

We have a unique opportunity to bring the brightest OpenStack, Cloud and Open Infrastructure engineers, consultants and field experts to the Triangle area between February 4th – 6th, 2019. Pl

BMaaS Part 3: Multi-tenancy

So far we have learned how to configure and provision baremetal nodes with OpenStack Ironic in Part 1 of the blog. We also configured our deployment to allow zero-touch discovery in Part 2. The nex